When I set out to write this blog, I had a very modest goal: to publish one post every two weeks. And yet, since starting, I’ve kept to this plan only once, missing my deadline by at least a day on every other occasion.

Being delayed on an inconsequential personal project is hardly surprising. What is surprising, though, is that even well-resourced, highly coordinated, and experienced teams working on critical projects (infrastructure development, government websites, space shuttle launches) are susceptible to the same missteps.

The primary force behind these misjudgments is a tendency called the planning fallacy, introduced by Daniel Kahneman and Amos Tversky in their 1977 paper “Intuitive Prediction: Biases and Corrective Procedures.” The planning fallacy, in turn, is driven by two distinct mental lapses: unrealistic optimism and blindness to base-rate information.

Unrealistic Optimism

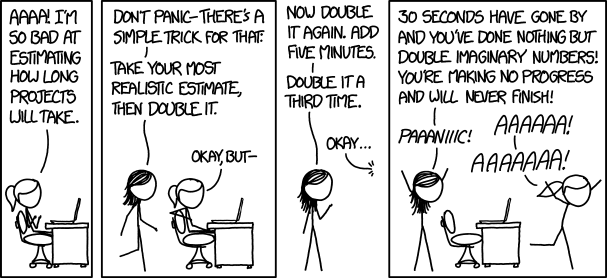

Optimism is generally a useful quality, but left unchecked, it can lead to trouble. Say you’ve been asked to estimate the time required to complete a task for a project you’re working on. Typically, you’ll start by finding a similar problem, identifying the subtasks it involved, estimating the time for each, and finally adding some buffer for unexpected changes. Here, confidence in the subtasks you know and understand will lead you to underestimate the ones you don’t; the actual complexity of those unknown subtasks emerges only during implementation.

Neglecting Base-Rate Information

The second lapse is an exclusive reliance on the “inside view” (the assessment of the team assigned to the project) at the expense of the “outside view” (statistical information about how other teams fared on similar projects). In some cases, statistical information may not be available, but as Kahneman points out in Thinking, Fast and Slow, even those with intimate knowledge of the outside view rarely take advantage of it; somehow, the projects we work on seem more novel than they really are.

People who have information about a particular case rarely feel the need to know the statistics of the class to which the case belongs.

Why does this matter?

Effective planning affects every area of our lives. While it’s important for employees and entrepreneurs alike to produce good-quality work, it’s equally important to deliver it on time; habitual delays make one seem unreliable to managers and clients, which is a reliable way (no pun intended) to impede career and business growth. Poor planning can also spill over into personal life. When we try to meet an idealistic schedule instead of fixing it, we end up working weekends, missing time with family and friends, piling on stress, and, if this goes on long enough, potentially burning out.

Understanding how people plan is also useful when assessing plans presented to us. Say you’re working on a landscaping project and evaluating quotes from multiple vendors. You’ll likely see a wide range of estimated times and costs (you do get quotes from multiple vendors, right?). Ordinarily, you would lean towards the bid with the shortest time to completion; time is money, after all. But this would often be the wrong choice, since the shortest estimate may simply be the most optimistic one. Someone who understands the planning process, and our inherent biases, will compare each bid against similar completed projects before choosing.

The good news is that, because “errors of judgment are often systematic rather than random, manifesting bias rather than confusion,” as Kahneman and Tversky point out, we can develop simple systems to mitigate planning errors. In Thinking, Fast and Slow, Kahneman describes a forecasting method known as reference class forecasting, applied by the Danish planning expert Bent Flyvbjerg:

- Identify an appropriate reference class (kitchen renovations, large railway projects, etc.).
- Obtain the statistics of the reference class (in terms of cost per mile of railway, or of the percentage by which expenditures exceeded budget). Use the statistics to generate a baseline prediction.
- Use specific information about the case to adjust the baseline prediction, if there are particular reasons to expect the optimistic bias to be more or less pronounced in this project than in others of the same type.
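The three steps translate fairly directly into code. Below is a minimal sketch in Python, assuming you keep a record of past projects with their planned and actual durations. The `Project` type, the `forecast` function, its `adjustment` multiplier, and every number in the example are hypothetical, made up for illustration rather than taken from Kahneman or Flyvbjerg. The reference-class statistic used here is the ratio of actual to planned duration, the time equivalent of "the percentage by which expenditures exceeded budget," applied to the team's own inside-view estimate.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Project:
    category: str          # e.g. "kitchen renovation" (hypothetical labels)
    estimated_days: float  # what the team planned
    actual_days: float     # what it actually took


def reference_class(history: list[Project], category: str) -> list[Project]:
    """Step 1: keep only past projects of the same type."""
    return [p for p in history if p.category == category]


def overrun_ratios(projects: list[Project]) -> list[float]:
    """Step 2a: actual / planned duration for each project (1.5 means 50% over)."""
    return [p.actual_days / p.estimated_days for p in projects]


def forecast(inside_estimate: float, history: list[Project], category: str,
             adjustment: float = 1.0) -> float:
    """Reference-class forecast for a new project, in days.

    inside_estimate: the team's own (inside-view) estimate.
    adjustment: hypothetical case-specific multiplier for step 3; below 1.0
    means concrete reasons to expect less optimism bias than usual, above
    1.0 means more.
    """
    peers = reference_class(history, category)
    if not peers:
        raise ValueError(f"no reference class data for {category!r}")
    # Step 2b: baseline = inside estimate scaled by the median overrun.
    baseline = inside_estimate * median(overrun_ratios(peers))
    # Step 3: nudge the baseline only for specific, known reasons.
    return baseline * adjustment


if __name__ == "__main__":
    # Made-up history, for illustration only.
    history = [
        Project("kitchen renovation", 30, 45),
        Project("kitchen renovation", 20, 26),
        Project("kitchen renovation", 40, 70),
        Project("railway", 900, 1400),
    ]
    # Overrun ratios 1.5, 1.3, 1.75 -> median 1.5, so a 30-day plan becomes:
    print(forecast(30, history, "kitchen renovation"))  # → 45.0
```

Using the median keeps a single disastrous project from dominating the baseline; taking a higher percentile of the overrun ratios instead would give a more conservative forecast. The `adjustment` step comes last and defaults to 1.0, so case-specific judgment only nudges the outside-view baseline rather than replacing it.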

On a related note, read Fred Brooks’ The Mythical Man-Month (essay 2, p. 13) for a detailed discussion of why software projects fall behind schedule.